Today was April Fools’, and in line with our long tradition of prankery, we pretended that we had invented an Artificial Intelligence algorithm for violin expertise. This ‘algorithm’, according to our prank, could recognize different parts of a violin, predict its age, and tell us where it was made. The joke was a bit misleading … but it actually wasn’t so far from the truth.

Yes, of course a computer can never replace human expertise. But the point of the joke was to show how intelligent software can help us categorize and analyze photographs in ways that support and strengthen our work as experts.

Today, as your image uploads came raining in, our team was on standby behind the curtain. We looked at every photo submitted and gave a quick evaluation. At first our answers were basic (c. 1920, German, etc.) and later, as the ruse dissolved, they became more capricious (coefficient of Italianity … or was that Italinanity?). You can see the submissions and our responses here. We didn’t get them all right, but responding to an average of five submissions per minute was a challenge.


Responses from the phoney AI ‘algorithm’. Over 500 images were submitted in under two hours, meaning we responded to an average of five submissions per minute.

If the photos are good enough, an expert can tell 80% of what is important about a violin without seeing it in person. Of course, the last 20% is the most important part of expertise. Nothing can replace seeing an instrument in person, holding it in your hands, and making a judgement based on years of experience and careful study. No responsible expert would give an attribution without seeing the instrument in person, but most experts can give a preliminary opinion on the basis of photographs.

Two years ago in Tainan, I was chatting with the curator of the Chi-Mei collection, Dai-Ting Chung, about the future of expertise. Dai-Ting asked this question: if a human can do 80% of expertise just from looking at a photograph, what percentage could be done by a machine? Can we train a computer to do some of the same work that humans do when studying a violin? In his ever-knowledgeable, ever-optimistic manner, he assured me this will happen; it’s only a question of time.

What is Artificial Intelligence?

Artificial intelligence is everywhere. It drives the Internet and controls the apps we spend our lives on. It suggests music, movies, and advertisements based on what we’ve enjoyed in the past. It can play chess and Go better than the grandmasters and assess loan and insurance risks better than armies of professional analysts. Soon AI will drive our cars, manage our investments, and diagnose diseases. Artificial Intelligence is a crucial subject which will affect just about every aspect of our lives. Of particular interest to the arts is the branch of AI known as Computer Vision, which, I believe, will have some very interesting applications in the world of instrument expertise.

Late last century, Artificial Intelligence research split into two camps: the ‘rules-based’ and the ‘neural-network’ approaches. The ‘rules-based’ approach tried to make machines intelligent by giving them rules: if A, then B. This approach worked well for basic problems with limited possible outcomes but it broke down as the complexity of a problem increased. To train a computer to recognize a cat in an image, a rules-based algorithm would say ‘if the image contains two pointy ears over a round face with long whiskers and a pink nose, there’s probably a cat in the picture’. But this rule cannot stop the computer from deciding that leopards and cheetahs are cats too. Biologically they are, but if the goal is to teach a computer, or a human, to recognize a house cat, the distinction is crucial.
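For the curious, the ‘if A, then B’ idea can be sketched in a few lines of Python. This is only an illustration: the function name and the lists of traits are invented for the example and do not come from any real system.

```python
# Hypothetical hand-written rule; the trait names are illustrative only.
def looks_like_cat(features: set[str]) -> bool:
    """Rules-based check: if every required trait is present, answer 'cat'."""
    required = {"pointy ears", "round face", "long whiskers", "pink nose"}
    return required <= features  # True when all required traits were observed


house_cat = {"pointy ears", "round face", "long whiskers", "pink nose", "small body"}
leopard = {"pointy ears", "round face", "long whiskers", "pink nose", "spotted coat"}
dog = {"floppy ears", "long snout", "black nose"}

print(looks_like_cat(house_cat))  # True
print(looks_like_cat(leopard))    # True: the rule cannot tell a leopard from a house cat
print(looks_like_cat(dog))        # False
```

Fixing the leopard problem means adding another rule, and then another for the next exception, which is roughly why this approach struggles as problems grow more complex.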

The ‘neural-network’ strategy took a different approach. Instead of giving the computer a system of rules, computer scientists fed computers heaps and heaps of data and let the computer train itself to recognize patterns and identify discrepancies. Much like a human brain learns about the world by experiencing it, a computer, so the thinking goes, can learn by being fed enough raw data about a particular issue.

Computer Vision uses neural-networks and deep-learning to teach computers to classify objects in images. To train a computer to tell the difference between a cat and a dog, programmers feed that computer hundreds of thousands of images of cats labeled as cats and of dogs labeled as dogs. By the time the neural-network has processed and consumed all that data, it can accurately predict which image contains a cat or a dog, and with some additional guidance it can tell you which is a Border Collie, which is a Spaniel, and which is a Labrador. Have you ever wondered how Google image search works? Or how the faces of your friends are automatically tagged on images you upload to Facebook? Machine learning through Computer Vision has taught computers to see and analyze images, just like humans do, perhaps even better than humans do.
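To give a feel for what ‘learning from labeled examples’ means, here is a toy sketch. It trains a single artificial neuron (a perceptron), which is vastly simpler than the deep networks used in real Computer Vision, and instead of images it uses two made-up measurements per animal. The feature names, the numbers and the function names are all assumptions for the sake of illustration.

```python
# Toy labeled data: (ear_pointiness, snout_length) on an invented 0..1 scale.
# Label 1 = cat, label 0 = dog. Every value here is made up for the example.
examples = [
    ((0.90, 0.20), 1), ((0.30, 0.80), 0),
    ((0.80, 0.30), 1), ((0.20, 0.90), 0),
    ((0.95, 0.10), 1), ((0.40, 0.70), 0),
    ((0.70, 0.25), 1), ((0.50, 0.85), 0),
]


def train(data, epochs=100, learning_rate=0.1):
    """Perceptron rule: nudge the weights whenever a prediction is wrong."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        mistakes = 0
        for (x1, x2), label in data:
            predicted = 1 if weights[0] * x1 + weights[1] * x2 + bias > 0 else 0
            error = label - predicted  # -1, 0 or +1
            if error != 0:
                weights[0] += learning_rate * error * x1
                weights[1] += learning_rate * error * x2
                bias += learning_rate * error
                mistakes += 1
        if mistakes == 0:  # every training example is classified correctly
            break
    return weights, bias


def predict(model, features):
    """Return 'cat' or 'dog' for a pair of measurements."""
    (w1, w2), bias = model
    x1, x2 = features
    return "cat" if w1 * x1 + w2 * x2 + bias > 0 else "dog"


model = train(examples)
print(predict(model, (0.85, 0.15)))  # an unseen animal with pointy ears, short snout
print(predict(model, (0.25, 0.75)))  # an unseen animal with floppy ears, long snout
```

Nobody told the program what a cat is; it worked out a dividing line from the labeled examples alone. Real systems do the same thing with millions of adjustable weights and raw pixels rather than two hand-picked numbers.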


So how does this apply to violins?

Throughout history, the best violin experts are the ones who have seen the most instruments, kept the best notes, and worked hard to categorize the violins they have encountered. The more instruments you see and the more you study them, the better the expert you become.

This morning, there was no AI, or at least none like we promised. The real purpose of today’s gag was to bring awareness to this technology and to provoke a discussion of the implications it will have on the future of stringed instrument expertise.

Tarisio has three AI initiatives in progress:

1) The parts detector

In 2019 Tarisio began developing a ‘parts-detector’ together with computer scientist Benoît Fayolle. Using neural-network and deep-learning techniques, Benoît has built an app that can identify the various components of a violin and output them as crops of the original image. In next week’s Carteggio, Benoît will explain his methods but you can experiment with a beta version of the app here: https://strip.tarisio.com/. Be sure to use an image which is straight on and not taken from an oblique angle.

The STRIP app evaluates images and categorizes the parts it “sees”.

This may sound boring and nerdy and not particularly relevant but bear with me here… Most people use the Cozio archive to view authentic examples of known makers. Let’s say you have a violin in front of you that you suspect might be a Pressenda: chances are you will go to Cozio, search for Pressenda, and browse through the examples. But what if you could filter those examples to show only the features that you need to see? Soon, you’ll be able to filter for images of Pressenda sound holes and the archive will show cropped images all at the same size and resolution. Or perhaps you want to see Stradivari scrolls from the period 1720-1730, and with just a few clicks, the filtered, cropped images will display for you to compare.

Soon the Cozio archive will allow you to filter for specific instrument parts.

2) Back analysis

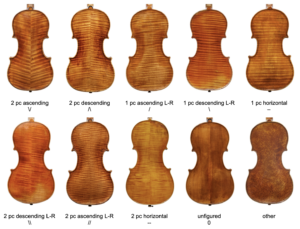

Most violin backs are made of maple; most are made in one or two pieces; and most have horizontal patterns in the wood we refer to as flame. Considering the various configurations of one- and two-piece backs with sloping or horizontal flame, there are ten possible orientations:

The ten possibilities for flame orientation on the backs of instruments.

We have also trained the algorithm to categorize images of backs according to the orientation of flame. This may seem technical but categorizing an instrument by the configuration of flame on its back instantly divides the archive into ten discrete groups. This allows a user to filter results and quickly refine a selection. Let’s say you want to know if a certain Vuillaume is featured in the Cozio archive. Instead of scrolling through all 1,168 Vuillaume violins, you can quickly filter for only those with two-piece backs with flame descending from the center joint. The true value of this feature, like the parts detector above, is not as a stand-alone app but how it can be integrated into the archive. Looking for a Pressenda violin with a two-piece back with ascending flame? We can filter those results for you.

The software analyzes the vectors of flame to determine if the back is in one piece or two and whether the flame is ascending or descending.
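As a rough illustration of how flame angles could be turned into a category, here is a simplified sketch. It is not the method used in our software. It assumes that each flame line has already been measured as a horizontal position (negative values left of the center line, positive values right of it) and an angle in degrees (positive meaning the line rises toward the right), and it assumes that a two-piece back shows mirrored slopes on its two halves while a one-piece back shows one consistent slope. The category names are illustrative and are not the ten groups described above.

```python
from statistics import mean


def classify_back(flames, tolerance_deg=3.0):
    """Classify a back from (x_position, angle_deg) flame measurements.

    Assumed conventions: x < 0 is left of the center line, and a positive
    angle means the flame line rises toward the right.
    """
    left = [angle for x, angle in flames if x < 0]
    right = [angle for x, angle in flames if x >= 0]
    if not left or not right:
        raise ValueError("need flame measurements on both sides of the center line")

    def direction(angle):
        # +1 rising to the right, -1 falling to the right, 0 roughly horizontal
        if angle > tolerance_deg:
            return 1
        if angle < -tolerance_deg:
            return -1
        return 0

    d_left, d_right = direction(mean(left)), direction(mean(right))

    if d_left == 0 and d_right == 0:
        return "horizontal flame (piece count not decidable from angles alone)"
    if d_left == 1 and d_right == -1:
        return "two-piece, flame descending from the joint"
    if d_left == -1 and d_right == 1:
        return "two-piece, flame ascending from the joint"
    if d_left == d_right:
        return ("one-piece, flame rising to the right" if d_left == 1
                else "one-piece, flame falling to the right")
    return "unclear"


mirrored = [(-0.8, 12.0), (-0.4, 10.0), (0.3, -11.0), (0.7, -9.0)]
continuous = [(-0.6, 8.0), (-0.2, 7.0), (0.4, 9.0)]
print(classify_back(mirrored))    # two-piece, flame descending from the joint
print(classify_back(continuous))  # one-piece, flame rising to the right
```

Once every back carries a label of this kind, filtering the archive becomes an ordinary database query rather than an image-analysis problem.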

3) Mapping the archive

There are hundreds of thousands of images in the Cozio archive. For various reasons, including copyright restrictions and owner discretion, the public section of the archive contains only a fraction of what’s available in the complete database.

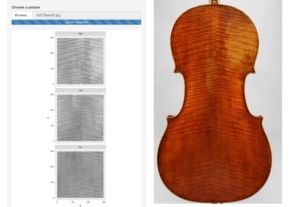

The next phase of our research is to create a visual index of every instrument in the archive. Using the back of instruments – as this is the area which is not covered by the fingerboard, tailpiece and bridge – we will create a map of shapes, patterns and textures. This map will work like a fingerprint and will enable us to index the distinguishing features of each instrument and assign values to the vectors of the instrument’s flame: the position, angle, width, length, intensity and variance. We can use the index to spot duplicates in the archive, or match new instruments with existing ones. Don’t get me wrong, this technology won’t ‘identify’ instruments but we expect that it will tell us when an instrument already exists in the archive.


Can we map and index the features of an instrument like we do a fingerprint?
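To show how a fingerprint-style index might flag a duplicate, here is a minimal sketch. It assumes each instrument has already been reduced to six numbers (position, angle, width, length, intensity and variance of its flame), each scaled to lie between 0 and 1. The archive IDs, the values and the matching threshold are all invented for the example; a real index would need many more measurements and a carefully tested threshold.

```python
import math

# Hypothetical index: archive ID -> (position, angle, width, length, intensity, variance)
archive_index = {
    "ID-0001": (0.42, 0.10, 0.33, 0.80, 0.65, 0.12),
    "ID-0002": (0.55, 0.72, 0.20, 0.60, 0.40, 0.30),
    "ID-0003": (0.15, 0.48, 0.51, 0.95, 0.77, 0.05),
}


def closest_match(fingerprint, index, threshold=0.05):
    """Return (archive_id, distance) for the nearest stored fingerprint.

    archive_id is None when nothing in the index lies within the threshold,
    i.e. the instrument is probably not in the archive yet.
    """
    if not index:
        return None, math.inf
    best_id, best_distance = min(
        ((archive_id, math.dist(fingerprint, stored)) for archive_id, stored in index.items()),
        key=lambda pair: pair[1],
    )
    if best_distance <= threshold:
        return best_id, best_distance
    return None, best_distance


# A new photograph of an instrument already in the index, measured slightly differently
new_photo = (0.56, 0.71, 0.21, 0.60, 0.41, 0.29)
print(closest_match(new_photo, archive_index)[0])  # ID-0002

# An instrument whose measurements resemble nothing in the index
stranger = (0.90, 0.90, 0.90, 0.10, 0.10, 0.90)
print(closest_match(stranger, archive_index)[0])   # None
```

Note that the sketch only answers ‘have we seen this back before?’. It says nothing about who made the instrument, which is exactly the limitation described above.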

As a final step, sometime in the future, AI will probably be able to recognize the work of the more prolific and iconic makers. We’re a long, long way from this last step but it’s not an impossibility. Personally, I think a computer will be able to identify a Vuillaume or a Roth before too long. The human eye can do that without much training because instruments by these makers are abundant and their antiquing and style are so distinctive. Neural-networks are only limited by the quantity, quality, and consistency of the data you feed them. Someday we may be surprised what AI can do.

Next week, Benoît explains his research. Disclaimer: it’s a technical article, so we are taking a chance here by deviating from Carteggio’s usual mission but we think you’ll enjoy the challenge of something truly futuristic.